2 Sets of Microphone + Headphone for Helmet, Motorcycle, Ski and Others in Microphones in Sofia - ID39213299 — Bazar.bg

Helmet 2-in-1 Headphones, Speaker Covers, Microphone Foam Sponges, Accessories for Q2 Q7/E6/E6 PLUS V6/V4 Intercom - Badu.bg

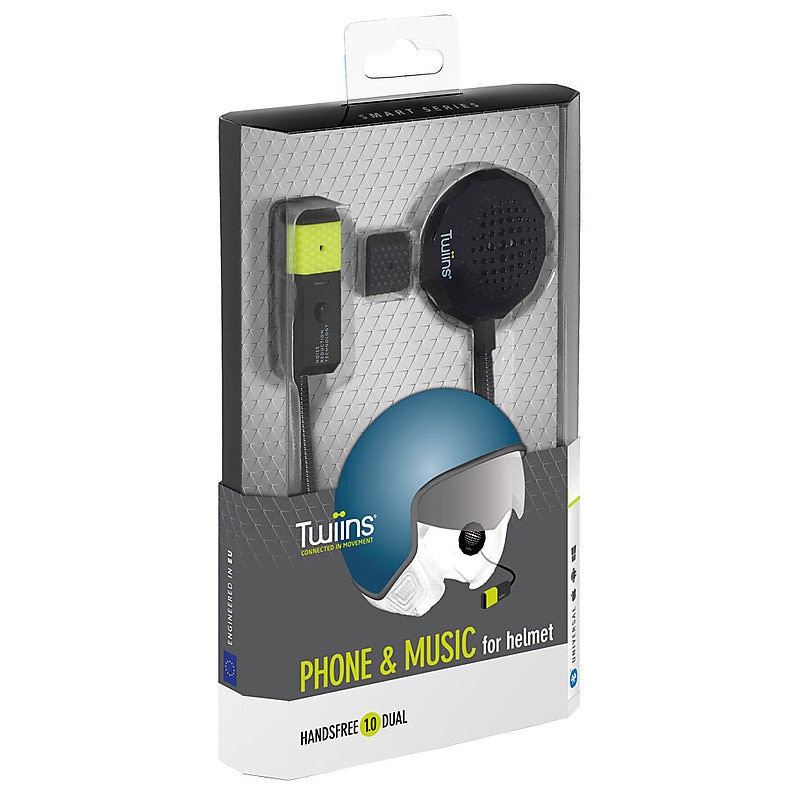

Bluetooth Hands-free Headphones with Microphone for Motorcycle Helmet in Headphones and Portable Speakers in Haskovo - ID42622277 — Bazar.bg

Headphone and Microphone Kit for Baofeng, Wouxun, Kenwood Portable Radios, for Motorcycle Headsets - eMAG.bg

New Motorcycle Riding Helmet Hands-free Headphones, Bluetooth 5.0 Motorcycle Headset with Microphone, Bone Conduction Audio Headphones, Sale < Headphones and In-Ear Headphones \ Fabrika-Dostavka.today

Foldable Wireless Bluetooth Headphones with Microphone, LED Light, Large Headphones, Gaming Headset, Stereo Music Headphones for Mobile Phone, Laptop - Badu.bg

2 pcs freedconn Helmet Intercom Motorcycle Bluetooth Headset Accessories, 2-in-1 Microphone Speaker, for tcom sc tcom-os Intercom System Series | Discount - www.galaxymart.net

3.5 mm Wired Gaming Headphones, PC Bass Stereo Gaming Headset for PS4, Xbox One, Switch, Phone, Laptop, Headset with Microphone - Badu.bg