Foodness BEVANDA D'ORZO BIO – compatible with Caffitaly System®* – Delivery of coffee and coffee machines Caffitaly.bg

Espresso Collection Classico – aluminium capsules compatible with Nespresso – Delivery of coffee and coffee machines Caffitaly.bg

Set of 2 coffee capsules, compatible with Caffitaly, new generation, refillable/washable, plastic, brown, with brush and spoon - eMAG.bg

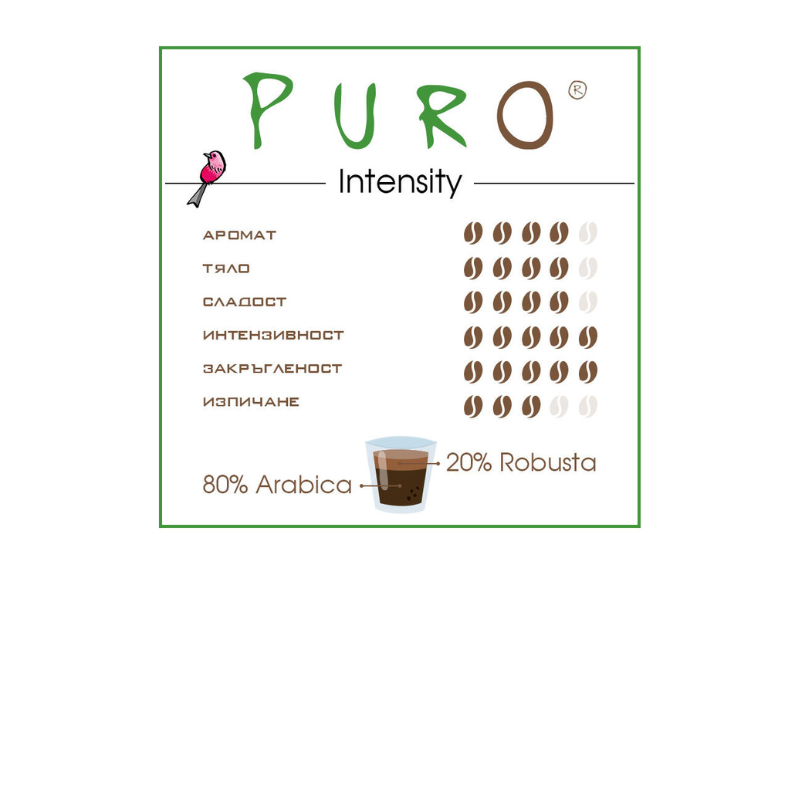

PURO 4U Fairtrade coffee capsules, 96 pcs., compatible with the "Caffitaly" system. > Cafissimo / Caffitaly

Best Moment SUPREMO – compatible with NESCAFE'®* Dolce Gusto®* – Delivery of coffee and coffee machines Caffitaly.bg