A changed CSKA beat a timid Levski but missed out on the title: red cards, absurd mistakes and frayed nerves in the derby (VIDEO)

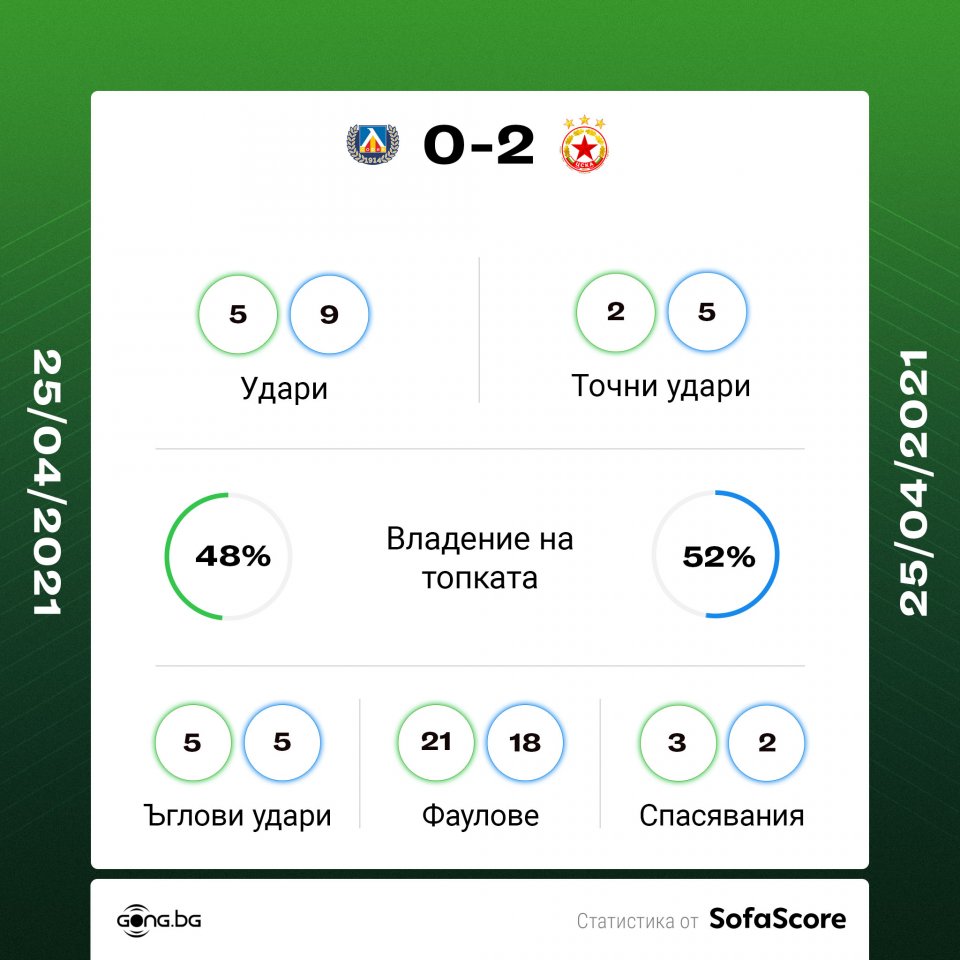

CSKA 1948: Final score of the efbet League round 21 match between CSKA and Levski. #CSKA1948 #РОДЕНИВСОФИЯ #efbetLeague